| Return type | Member |
| --- | --- |
| "DaskXGBClassifier" | fit (self, _DataT X, _DaskCollection y, *, Optional[_DaskCollection] sample_weight=None, Optional[_DaskCollection] base_margin=None, Optional[Sequence[Tuple[_DaskCollection, _DaskCollection]]] eval_set=None, Optional[Union[str, Sequence[str], Callable]] eval_metric=None, Optional[int] early_stopping_rounds=None, Union[int, bool] verbose=True, Optional[Union[Booster, XGBModel]] xgb_model=None, Optional[Sequence[_DaskCollection]] sample_weight_eval_set=None, Optional[Sequence[_DaskCollection]] base_margin_eval_set=None, Optional[_DaskCollection] feature_weights=None, Optional[Sequence[TrainingCallback]] callbacks=None) |
| Any | predict_proba (self, _DaskCollection X, bool validate_features=True, Optional[_DaskCollection] base_margin=None, Optional[Tuple[int, int]] iteration_range=None) |
| Any | predict (self, _DataT X, bool output_margin=False, bool validate_features=True, Optional[_DaskCollection] base_margin=None, Optional[Tuple[int, int]] iteration_range=None) |
| Any | apply (self, _DataT X, Optional[Tuple[int, int]] iteration_range=None) |
| Awaitable[Any] | __await__ (self) |
| Dict | __getstate__ (self) |
| "distributed.Client" | client (self) |
| None | client (self, "distributed.Client" clt) |
| None | __init__ (self, Optional[int] max_depth=None, Optional[int] max_leaves=None, Optional[int] max_bin=None, Optional[str] grow_policy=None, Optional[float] learning_rate=None, Optional[int] n_estimators=None, Optional[int] verbosity=None, SklObjective objective=None, Optional[str] booster=None, Optional[str] tree_method=None, Optional[int] n_jobs=None, Optional[float] gamma=None, Optional[float] min_child_weight=None, Optional[float] max_delta_step=None, Optional[float] subsample=None, Optional[str] sampling_method=None, Optional[float] colsample_bytree=None, Optional[float] colsample_bylevel=None, Optional[float] colsample_bynode=None, Optional[float] reg_alpha=None, Optional[float] reg_lambda=None, Optional[float] scale_pos_weight=None, Optional[float] base_score=None, Optional[Union[np.random.RandomState, int]] random_state=None, float missing=np.nan, Optional[int] num_parallel_tree=None, Optional[Union[Dict[str, int], str]] monotone_constraints=None, Optional[Union[str, Sequence[Sequence[str]]]] interaction_constraints=None, Optional[str] importance_type=None, Optional[str] device=None, Optional[bool] validate_parameters=None, bool enable_categorical=False, Optional[FeatureTypes] feature_types=None, Optional[int] max_cat_to_onehot=None, Optional[int] max_cat_threshold=None, Optional[str] multi_strategy=None, Optional[Union[str, List[str], Callable]] eval_metric=None, Optional[int] early_stopping_rounds=None, Optional[List[TrainingCallback]] callbacks=None, **Any kwargs) |
| bool | __sklearn_is_fitted__ (self) |
| Booster | get_booster (self) |
| "XGBModel" | set_params (self, **Any params) |
| Dict[str, Any] | get_params (self, bool deep=True) |
| Dict[str, Any] | get_xgb_params (self) |
| int | get_num_boosting_rounds (self) |
| None | save_model (self, Union[str, os.PathLike] fname) |
| None | load_model (self, ModelIn fname) |
| Dict[str, Dict[str, List[float]]] | evals_result (self) |
| int | n_features_in_ (self) |
| np.ndarray | feature_names_in_ (self) |
| float | best_score (self) |
| int | best_iteration (self) |
| np.ndarray | feature_importances_ (self) |
| np.ndarray | coef_ (self) |
| np.ndarray | intercept_ (self) |
| "DaskXGBClassifier" | _fit_async (self, _DataT X, _DaskCollection y, Optional[_DaskCollection] sample_weight, Optional[_DaskCollection] base_margin, Optional[Sequence[Tuple[_DaskCollection, _DaskCollection]]] eval_set, Optional[Union[str, Sequence[str], Metric]] eval_metric, Optional[Sequence[_DaskCollection]] sample_weight_eval_set, Optional[Sequence[_DaskCollection]] base_margin_eval_set, Optional[int] early_stopping_rounds, Union[int, bool] verbose, Optional[Union[Booster, XGBModel]] xgb_model, Optional[_DaskCollection] feature_weights, Optional[Sequence[TrainingCallback]] callbacks) |
| _DaskCollection | _predict_proba_async (self, _DataT X, bool validate_features, Optional[_DaskCollection] base_margin, Optional[Tuple[int, int]] iteration_range) |
| _DaskCollection | _predict_async (self, _DataT data, bool output_margin, bool validate_features, Optional[_DaskCollection] base_margin, Optional[Tuple[int, int]] iteration_range) |
| Any | _apply_async (self, _DataT X, Optional[Tuple[int, int]] iteration_range=None) |
| Any | _client_sync (self, Callable func, **Any kwargs) |
| Dict[str, bool] | _more_tags (self) |
| str | _get_type (self) |
| None | _load_model_attributes (self, dict config) |
| Tuple[Optional[Union[Booster, str, "XGBModel"]], Optional[Metric], Dict[str, Any], Optional[int], Optional[Sequence[TrainingCallback]]] | _configure_fit (self, Optional[Union[Booster, "XGBModel", str]] booster, Optional[Union[Callable, str, Sequence[str]]] eval_metric, Dict[str, Any] params, Optional[int] early_stopping_rounds, Optional[Sequence[TrainingCallback]] callbacks) |
| DMatrix | _create_dmatrix (self, Optional[DMatrix] ref, **Any kwargs) |
| None | _set_evaluation_result (self, TrainingCallback.EvalsLog evals_result) |
| bool | _can_use_inplace_predict (self) |
| Tuple[int, int] | _get_iteration_range (self, Optional[Tuple[int, int]] iteration_range) |

"DaskXGBClassifier" xgboost.dask.DaskXGBClassifier.fit (self, _DataT X, _DaskCollection y, *, Optional[_DaskCollection] sample_weight = None, Optional[_DaskCollection] base_margin = None, Optional[Sequence[Tuple[_DaskCollection, _DaskCollection]]] eval_set = None, Optional[Union[str, Sequence[str], Callable]] eval_metric = None, Optional[int] early_stopping_rounds = None, Union[int, bool] verbose = True, Optional[Union[Booster, XGBModel]] xgb_model = None, Optional[Sequence[_DaskCollection]] sample_weight_eval_set = None, Optional[Sequence[_DaskCollection]] base_margin_eval_set = None, Optional[_DaskCollection] feature_weights = None, Optional[Sequence[TrainingCallback]] callbacks = None)

Fit gradient boosting model.

Note that calling ``fit()`` multiple times re-fits the model object from scratch. To resume training from a previous checkpoint, explicitly pass the ``xgb_model`` argument.
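The difference between re-fitting and resuming can be sketched as follows. This is a minimal illustration, not a reference implementation: the function names `fit_then_continue` and `run_local_demo`, the round counts, and the random data are all assumptions made for the example. The xgboost and Dask imports are placed inside the functions so that the snippet can be loaded on a machine without a cluster.

```python
def fit_then_continue(client, X, y, first_rounds=5, extra_rounds=5):
    """Train one model, then resume from its booster in a second model.

    `client` is a distributed.Client; `X` and `y` are Dask collections
    with matching row partitioning.
    """
    # Lazy import: requires an xgboost build with Dask support.
    from xgboost import dask as dxgb

    first = dxgb.DaskXGBClassifier(n_estimators=first_rounds, tree_method="hist")
    first.client = client
    first.fit(X, y)  # calling first.fit(X, y) again would start from scratch

    resumed = dxgb.DaskXGBClassifier(n_estimators=extra_rounds, tree_method="hist")
    resumed.client = client
    # Passing xgb_model asks fit() to continue from the existing booster.
    resumed.fit(X, y, xgb_model=first.get_booster())
    return first, resumed


def run_local_demo():
    """Hypothetical driver: a two-worker local cluster and random data."""
    import dask.array as da
    from distributed import Client, LocalCluster

    with LocalCluster(n_workers=2, threads_per_worker=1) as cluster, Client(cluster) as client:
        X = da.random.random((1000, 10), chunks=(250, 10))
        y = (da.random.random(1000, chunks=250) > 0.5).astype(int)
        first, resumed = fit_then_continue(client, X, y)
        return (
            first.get_booster().num_boosted_rounds(),
            resumed.get_booster().num_boosted_rounds(),
        )
```

Calling `run_local_demo()` returns the number of boosted rounds in each booster. If continuation behaves as the note describes, the second count should be larger than the first.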

Parameters

----------

X :

Feature matrix. See :ref:`py-data` for a list of supported types.

When ``tree_method`` is set to ``hist``, :py:class:`QuantileDMatrix` is used internally instead of :py:class:`DMatrix` to conserve memory. However, this has performance implications when the device of the input data does not match the device used by the algorithm. For instance, if the input is a numpy array on CPU but ``cuda`` is used for training, the data is first processed on CPU and then transferred to GPU.

y :

Labels.

sample_weight :

Instance weights.

base_margin :

Global bias for each instance.

eval_set :

A list of (X, y) tuple pairs to use as validation sets, for which metrics will be computed. Validation metrics help track the performance of the model.

eval_metric : str, list of str, or callable, optional

.. deprecated:: 1.6.0

Use `eval_metric` in :py:meth:`__init__` or :py:meth:`set_params` instead.

early_stopping_rounds : int

.. deprecated:: 1.6.0

Use `early_stopping_rounds` in :py:meth:`__init__` or :py:meth:`set_params` instead.

verbose :

If `verbose` is True and an evaluation set is used, the evaluation metric measured on the validation set is printed to stdout at each boosting stage. If `verbose` is an integer, the evaluation metric is printed every `verbose` boosting stages. The last boosting stage, or the boosting stage found by using `early_stopping_rounds`, is also printed.

xgb_model :

File name of a stored XGBoost model, or a 'Booster' instance, to be loaded before training (allows training continuation).

sample_weight_eval_set :

A list of the form [L_1, L_2, ..., L_n], where each L_i is an array-like object storing instance weights for the i-th validation set.

base_margin_eval_set :

A list of the form [M_1, M_2, ..., M_n], where each M_i is an array-like object storing the base margin for the i-th validation set.

feature_weights :

Weight for each feature. Defines the probability of each feature being selected when colsample is being used. All values must be greater than 0, otherwise a `ValueError` is raised.

callbacks :

.. deprecated:: 1.6.0

Use `callbacks` in :py:meth:`__init__` or :py:meth:`set_params` instead.
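Since `eval_metric`, `early_stopping_rounds`, and `callbacks` are deprecated as `fit()` arguments, existing call sites can be migrated by moving those values to `set_params` first. The sketch below shows one possible way to do that. The helper names `split_deprecated_fit_args` and `fit_without_deprecated_args` are hypothetical and not part of xgboost; the code only assumes that the estimator exposes `set_params` and `fit`, as the member table lists.

```python
# Names of fit() arguments the docstring marks as deprecated since 1.6.0.
DEPRECATED_FIT_ARGS = ("eval_metric", "early_stopping_rounds", "callbacks")


def split_deprecated_fit_args(fit_kwargs):
    """Split keyword arguments into (estimator params, remaining fit kwargs).

    Deprecated arguments left at None are dropped, since None is their default.
    """
    estimator_params = {}
    remaining = {}
    for name, value in fit_kwargs.items():
        if name in DEPRECATED_FIT_ARGS:
            if value is not None:
                estimator_params[name] = value
        else:
            remaining[name] = value
    return estimator_params, remaining


def fit_without_deprecated_args(estimator, X, y, **fit_kwargs):
    """Apply deprecated arguments through set_params, then call fit()."""
    estimator_params, remaining = split_deprecated_fit_args(fit_kwargs)
    if estimator_params:
        estimator.set_params(**estimator_params)
    return estimator.fit(X, y, **remaining)
```

With a real estimator this would be called as `fit_without_deprecated_args(clf, X, y, eval_metric="logloss", eval_set=[(X_valid, y_valid)])`, where `clf`, `X_valid`, and `y_valid` are placeholders for the caller's own objects.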

Reimplemented from xgboost.sklearn.XGBModel.

Reimplemented in xgboost.dask.DaskXGBRFClassifier.
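The `eval_set`, `sample_weight_eval_set`, and `base_margin_eval_set` descriptions above imply that the three lists are indexed together: entry i of each per-set list belongs to the i-th (X, y) pair. A small pre-flight check along these lines can catch mismatched lists before any work is sent to the cluster. The function `count_validation_sets` is a hypothetical helper written for this illustration; it is not xgboost's own validation and only inspects list lengths, never the data.

```python
def count_validation_sets(eval_set=None, sample_weight_eval_set=None,
                          base_margin_eval_set=None):
    """Return the number of validation sets after checking the lists line up."""
    if eval_set is None:
        return 0
    n_sets = len(eval_set)
    # Each entry of eval_set is expected to be an (X, y) pair.
    for i, pair in enumerate(eval_set):
        if len(pair) != 2:
            raise ValueError(f"eval_set[{i}] must be an (X, y) pair")
    per_set_inputs = {
        "sample_weight_eval_set": sample_weight_eval_set,
        "base_margin_eval_set": base_margin_eval_set,
    }
    # Every optional per-set list needs one entry per validation set.
    for name, values in per_set_inputs.items():
        if values is not None and len(values) != n_sets:
            raise ValueError(
                f"{name} has {len(values)} entries but eval_set has {n_sets}"
            )
    return n_sets
```

The check is deliberately limited to the outer lists, so it does not trigger any Dask computation on the collections themselves.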

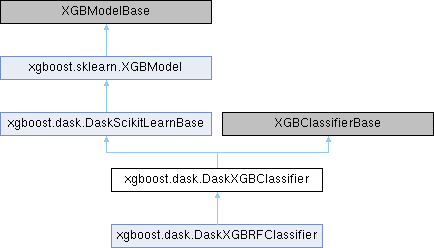

Public Member Functions inherited from xgboost.dask.DaskScikitLearnBase

Public Member Functions inherited from xgboost.sklearn.XGBModel

Data Fields inherited from xgboost.dask.DaskScikitLearnBase

Data Fields inherited from xgboost.sklearn.XGBModel

Protected Member Functions inherited from xgboost.dask.DaskScikitLearnBase

Protected Member Functions inherited from xgboost.sklearn.XGBModel

Protected Attributes inherited from xgboost.dask.DaskScikitLearnBase

Protected Attributes inherited from xgboost.sklearn.XGBModel

Static Protected Attributes inherited from xgboost.dask.DaskScikitLearnBase